DevOps Analytics: Five Steps To Visualise Your Jenkins/UrbanCode Deploy Delivery Pipeline In Action!

So you've got your delivery pipeline all set and delivering releases.

Developers are delivering code into source control (let's say Git, as an example), and from there you're running automated builds in Jenkins and using IBM's UrbanCode Deploy to automate deployments.

Everyone is happy because releases are getting out the door quicker than before and with less effort! And the automated tests you've added to the pipeline are helping weed out issues earlier, so quality is improving as well.

But one thing that isn't as easy is getting a sense of what is happening in your pipeline, both in terms of seeing where a particular set of changes is in the pipeline and in terms of how well the pipeline is performing.

Enter DevOps Analytics!

By this I mean the following three capabilities:

- A live data set representing all of the activity that is occurring in your delivery pipeline.

- A way of visualising this data. More specifically, the ability to visualise the answers to certain questions asked of the data set.

- The capability to inspect this data in ways that allow you to draw useful conclusions and that support investigative activities. Essentially, this means being able to keep rephrasing the questions you are asking of the data set to make the answers more and more interesting.

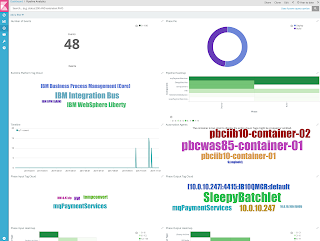

Just to give you a taste, here's an example of the type of dashboarding this allows you to achieve. Read further and you'll see how we created this and what it lets us do!

DevOps Analytics lets us view the flow of code changes through the pipeline, seeing where changes have gone into builds, where those builds have been deployed for testing, what quality checks have been run against those changes, when the build ended up in production, and what state the running system is in.

But importantly, it provides this information in a dynamic fashion, allowing different questions to be asked, and different aspects of the data to be "zoomed in" on.

For example, I might start by asking what builds a change went into. That would let me identify a particular build that I could then focus on. The next question I'd ask is where and when this build had been deployed. I could narrow down the question and ask for deploys to a particular environment, then look at a timeline of those deploys and pick out a deploy I was interested in at a particular point in time. I could then "zoom out" and look at tests that had been run against that environment to see what the quality was like after that deploy. If I find a test failure, I could again zoom out and see what other environments that test was failing in to see if it might be environment specific.

This is all actually quite easy to do!

I've created a solution based on the following components, to great effect.

- ElasticSearch as the datastore for the pipeline data set.

- curl to make REST-based calls to post data to ElasticSearch from the various stages in my pipeline.

- Kibana to allow me to create powerful dashboards that visualise the data, and allow me to filter the views in ways that give me insights into what is happening in the pipeline.

Figure 3 - DevOps Analytics solution components.

The combination of ElasticSearch, curl and the ElasticSearch REST API provides the first of the DevOps Analytics capabilities I listed: a live data set that represents what is happening in your pipeline.

Kibana provides the second and third capabilities: a way of visualising the data, as well as a way of inspecting the data by asking and rephrasing questions to give increasingly interesting answers.

Let me talk you through the steps I followed to get this solution going.

Step 1 - Decide On A Pipeline Data Model

This is the most important step. You need to decide what data you're going to put into your dataset, and how you want to structure it.

Obviously the data model you use for your DevOps Analytics dataset will directly determine the types of questions you can ask of the data. So spend some time thinking about the questions that are important to you and the users of this data. It's also good to think ahead at this stage about which stages in your pipeline you plan on instrumenting to collect data (we'll do that in step 3).

Here are the things that were important to me for my dataset:

- A common model for looking at activity across the entire pipeline.

- Who/what was performing an activity.

- What application/component was involved.

- What technology stack this application/component is from.

- Where the activity was taking place.

- When it was occurring.

- How long the activity took.

- What was the input.

- What change did it produce.

I decided I wanted to base my dataset on events that occur in the pipeline - so the key type in my dataset is that of a Pipeline Event. Figure 4 shows the attributes I decided on for a pipeline event.

I'm still tinkering with this data model, but for the moment it is serving me pretty well so I'll use it as the base for our example.
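Since figure 4 is not reproduced here, the following is only a rough sketch of how the concerns listed above could map onto a pipeline event record. It uses Python purely for illustration. Apart from runtimePlatform, which is named later in this post, every field name and sample value format is an assumption and not the actual attribute set from figure 4.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime


@dataclass
class PipelineEvent:
    """One activity in the pipeline. Field names are illustrative assumptions."""
    pipelinePhase: str    # what kind of activity, e.g. "Build" or "Deploy"
    pipelineTool: str     # who/what performed it, e.g. "Jenkins"
    component: str        # application/component involved
    runtimePlatform: str  # technology stack of the component
    location: str         # where it ran (build agent or environment)
    startTimestamp: str   # when it started (ISO 8601)
    endTimestamp: str     # when it finished (ISO 8601)
    inputVersion: str     # what went in, e.g. a commit id (hypothetical)
    outputVersion: str    # what came out, e.g. a build id (hypothetical)

    def duration_seconds(self) -> float:
        """How long the activity took, derived from the two timestamps."""
        start = datetime.fromisoformat(self.startTimestamp)
        end = datetime.fromisoformat(self.endTimestamp)
        return (end - start).total_seconds()

    def to_json(self) -> str:
        """Serialise the event as a JSON document ready for posting."""
        return json.dumps(asdict(self))


# Sample values are invented, loosely based on names used later in this post.
event = PipelineEvent(
    pipelinePhase="Build",
    pipelineTool="Jenkins",
    component="soapPaymentServices",
    runtimePlatform="IBM Integration Bus",
    location="pbciib10-container-02",
    startTimestamp="2017-10-17T13:14:00+00:00",
    endTimestamp="2017-10-17T13:15:30+00:00",
    inputVersion="example-commit",
    outputVersion="example-build",
)
print(event.duration_seconds())
print(event.to_json())
```

Storing both timestamps rather than a precomputed duration keeps the "when" and "how long" questions answerable from the same two fields.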

Step 2 - Provision The Tools

There are two components of what is known as the Elastic Stack that make up my solution - ElasticSearch and Kibana.

From their website...

"Elasticsearch is a distributed, RESTful search and analytics engine capable of solving a growing number of use cases. As the heart of the Elastic Stack, it centrally stores your data so you can discover the expected and uncover the unexpected."

"Kibana lets you visualize your Elasticsearch data and navigate the Elastic Stack, so you can do anything from learning why you're getting paged at 2:00 a.m. to understanding the impact rain might have on your quarterly numbers."

To get going quickly, I recommend using the Docker images of these two tools. I used these instructions: How to run ELK stack on Docker Container (IT'z Geek)

Note: You can skip the steps in those instructions for setting up LogStash and Beats for now. They're not necessary to get the basic solution up and running.

Once you're finished with those instructions you should have your two Docker containers running.

Figure 5 - Running containers for ElasticSearch and Kibana.

And you should be able to access Kibana with your browser.

Figure 6 - Kibana - no data yet!

The curl tool is usually already available on most Linux servers. But if not, or if you're running a tool on a different platform, you can easily download and install it from here: https://curl.haxx.se/download.html

All we need now is some data :)

Step 3 - Instrument Your Pipeline With Data Emitters

ElasticSearch has a simple REST API that we'll use to post data to our dataset. Easy peasy.

I've referred to using a data emitter to send pipeline activity data to my ElasticSearch datastore. This is really just some scripted logic that uses curl to post the data to the ElasticSearch REST API.

So in full my data emitter consists of:

- Scripted logic to map activity data to the data model presented in figure 4.

- A JSON template file that is used by this mapping logic to create a data file ready for posting.

- Scripted logic to post the JSON data file to the ElasticSearch REST API using curl.

All of the "scripted logic" mentioned above is in an ANT script at the moment as ANT is a great common denominator across build/deploy automation tools. I'm thinking about doing this in Java in future versions and deploying it as a jar file.

So start by creating a template JSON file for the data you want to post. Mine is shown in figure 7.

Then the "scripted logic" performs two simple steps. First, it replaces the template values with the values you want to post. Figure 8 shows my ANT example of this.

Then it posts the file with curl, using a call similar to the one shown in figure 9 (again, my ANT-based example).

Figure 8 - Instantiating our JSON template.
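For readers not using ANT, the template step can be sketched in Python roughly as follows. This is an illustration only: the template text and the placeholder names are assumptions, not the contents of the template file from figure 7.

```python
import json
from string import Template

# Hypothetical template; placeholder and field names are assumptions.
EVENT_TEMPLATE = Template("""{
  "pipelinePhase": "$phase",
  "pipelineTool": "$tool",
  "component": "$component",
  "startTimestamp": "$start",
  "endTimestamp": "$end"
}""")


def render_event(values: dict) -> str:
    """Fill in the template and check that the result is valid JSON."""
    # json.dumps(...)[1:-1] escapes quotes and backslashes inside each value
    # so that a stray quote cannot break the surrounding JSON string.
    escaped = {key: json.dumps(str(value))[1:-1] for key, value in values.items()}
    document = EVENT_TEMPLATE.substitute(escaped)  # KeyError if a value is missing
    json.loads(document)  # raises ValueError if the document is malformed
    return document


print(render_event({
    "phase": "Build",
    "tool": "Jenkins",
    "component": "soapPaymentServices",
    "start": "2017-10-17T13:14:00",
    "end": "2017-10-17T13:15:30",
}))
```

Validating the rendered document before posting means a bad substitution fails inside the pipeline step itself, not as a rejected request later.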

Figure 9 - Invoking curl to send a message to our ElasticSearch REST API endpoint.
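The posting step is a plain HTTP POST of the JSON document to an index. As a hedged sketch of the same call made from Python instead of curl, assuming ElasticSearch is listening on localhost:9200 and accepts documents at /<index>/_doc (older releases expect a mapping type name in place of _doc):

```python
import json
import urllib.request

# Assumptions: host, port and the _doc endpoint; adjust to your installation.
ES_URL = "http://localhost:9200"
INDEX = "pipeline_events"


def build_request(document: str, base_url: str = ES_URL,
                  index: str = INDEX) -> urllib.request.Request:
    """Build the POST request that indexes one JSON document."""
    return urllib.request.Request(
        url=f"{base_url}/{index}/_doc",
        data=document.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def post_event(document: str) -> dict:
    """Send the document and return ElasticSearch's JSON response."""
    with urllib.request.urlopen(build_request(document), timeout=10) as response:
        return json.load(response)


# Usage (needs a running ElasticSearch, so it is left commented out):
# post_event(json.dumps({"pipelinePhase": "Build", "component": "soapPaymentServices"}))
```

The index name is simply part of the URL, which is why no separate "create index" call appears here.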

Now let's add our data emitter to our Jenkins/UCD pipeline.

First add these steps to the build scripts running in your Jenkins build engine. I've added the emitter logic to a Jenkins build (see figure 10).

Figure 10 - Adding our data emitter to a Jenkins build.

Then add them to your component processes running in UrbanCode Deploy. In my example, shown in figure 11, I have added my data emitter logic to an existing Ant script that is wrapped as a UCD plugin. So I don't really need to do anything special in UCD. You may need to add an additional step to your component process where you call your data emitter - either wrapped as a plugin, or just call it from a Shell Script step.

Figure 11 - Options for adding your data emitter to a UCD component process.

With your data emitters in place, run a few builds and deploys and your pipeline will come alive and start putting data into ElasticSearch!

One quick point - you'll notice we didn't create a table space or anything similar in our ElasticSearch repository before putting data into it. In ElasticSearch, your data lives in an index. When you post your data, all you need to do is specify the index name and it will be created for you should it not already exist. Great :). I called my index pipeline_events.

Also note that for our example I've talked about data coming from Jenkins and UCD, but I've also been adding data emitters to other tools in the pipeline such as test tools and SCM tools.

Step 4 - Visualise The Data That Is Rolling In

Now let's start visualising this data in ways that allow us to draw conclusions from it. This means visualising the answers to our questions about what is happening in our pipeline.

Open up Kibana, and you should see data building up. Fantastic!

Figure 12 - DevOps Analytics data building up.

The actual visualisations you create will depend very much on the data model you decided on in step 1. I'll provide a few basic examples to get you started. For each I'll state the question being asked, and then how to visualise the answer.

Tip: What I've found is that once you start putting these visualisations together you tend to think of new questions to ask and then make adjustments to your data model to provide the data that drives new visualisations. So definitely approach this as an iterative exercise.

4.1 Question 1 - What Activity Is Happening In My Pipeline?

This is a very basic place to start. We all know that the pipeline covers many steps. Where is all the action happening? I've referred to this as the pipeline phase in my data model (see figure 4).

We'll use a simple donut chart visualization for this that shows a break-down of the activity in the pipeline by pipeline phase. In the example in figure 13 you can see the green section shows deploys, the blue section shows builds, and the purple section shows automated tests. As you'd expect, we do more deploys than builds. Hovering over the green section we discover we've done 25 deploys, which represents 48.08% of the activity in our pipeline. In this example we can also see that there aren't many automated tests being run.

Figure 13 - A "donut chart" showing the break-down of activity in the pipeline.

4.2 Question 2 - When Did This Activity Happen?

Most importantly, we'll want to consider when this activity is happening. Referring again to figure 4, this is represented in my data model using the start and end timestamps. This will allow us to ask time-based questions of our data set. You'll see later in step 5 how this becomes very useful, but for now let's just note that we'll be visualising the amount of activity that is happening, and when it is happening.

For this you use a timeline visualization which plots the amount of activity happening during a specific period as a line on a graph. In the example shown in figure 14 we can see there were 10 events in the pipeline at some point before 2017-09-23.

Figure 14 - A timeline showing the amount of activity in the pipeline for a period.

If I zoom in on the timeline (see figure 15) I can see a breakdown of exactly when these 10 events occurred. I can see that most of the activity occurred between 16:00 and 16:30, with an isolated event happening later on at around 17:25.

Figure 15 - "Zoomed in" view showing time of events across a shorter period.
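Both of these visualisations come down to counting events, first by pipeline phase and then by time bucket. Kibana does this for you, but as a minimal sketch of the arithmetic behind the charts, assuming invented sample data (only the 25 deploys and the 48.08% figure come from the example above; the build/test split and the timestamps are made up):

```python
from collections import Counter
from datetime import datetime

# 25 deploys out of 52 events reproduces the 48.08% quoted above.
# The split between builds and tests is an assumption.
phases = ["Deploy"] * 25 + ["Build"] * 20 + ["Test"] * 7


def phase_breakdown(phases):
    """Return {phase: (count, percentage of all events)} for the donut chart."""
    counts = Counter(phases)
    total = sum(counts.values())
    return {phase: (n, round(100 * n / total, 2)) for phase, n in counts.items()}


def bucket_by_half_hour(timestamps):
    """Count events per 30-minute bucket, keyed by the bucket's start time."""
    buckets = Counter()
    for ts in timestamps:
        t = datetime.fromisoformat(ts)
        start = t.replace(minute=0 if t.minute < 30 else 30, second=0, microsecond=0)
        buckets[start.isoformat()] += 1
    return dict(sorted(buckets.items()))


print(phase_breakdown(phases)["Deploy"])  # (25, 48.08)
print(bucket_by_half_hour([
    "2017-09-22T16:05:00",  # invented timestamps
    "2017-09-22T16:20:00",
    "2017-09-22T17:25:00",
]))
```

Zooming in on the timeline corresponds to choosing a smaller bucket size over a shorter window.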

4.3 Question 3 - What Technologies Are Involved In This Activity?

There are two aspects of this. We'll start by considering the application runtime technologies i.e. the technologies that the apps you are building and deploying run on.

Most teams start by setting up a delivery pipeline that supports a single application development language/technology - commonly just pure simple Java. But the more interesting ones apply these same concepts to all of the application development technologies that form part of their complete Enterprise Architecture stack.

So assuming our pipeline extends to cover all of these, we'll want to be able to ask the question of which application runtime technology the activity in our pipeline is related to. (As an aside, you may think of these as separate pipelines but we'll want them all covered by our DevOps Analytics so we can ask interesting questions that span the runtime technology boundaries.)

This information is represented in my data model as runtimePlatform. To visualize this, we'll use a tag cloud. Figure 16 shows that our pipeline is building, deploying and testing applications for IBM Integration Bus, IBM WebSphere Liberty, and IBM BPM (Core and AAIM). The relative size of each platform name gives an indication of how much activity there has been in the pipeline for that platform.

Figure 16 - A tag cloud of runtime platforms that are being serviced by the pipeline.

For the second aspect of this, let's look at the technologies/tools that make up our pipeline. Consider that for the pipeline that we're focusing on in our example we've already mentioned three technologies: Git, Jenkins and UrbanCode Deploy.

So let's say that we'd also like to ask which of these technologies is involved in the activity in our pipeline. Figure 17 shows that our pipeline activity is occurring in Jenkins and UrbanCode Deploy.

Figure 17 - A tag cloud of pipeline tools servicing our pipeline.
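To make "relative size" concrete, here is one simple way a tag cloud could turn event counts into font sizes. This is a sketch of linear scaling under invented counts and point sizes; it is not a description of how Kibana sizes its tags.

```python
def tag_sizes(counts, min_pt=12, max_pt=36):
    """Map each tag's count linearly onto a font size between min_pt and max_pt."""
    low, high = min(counts.values()), max(counts.values())
    span = high - low or 1  # avoid dividing by zero when all counts are equal
    return {
        tag: round(min_pt + (n - low) * (max_pt - min_pt) / span)
        for tag, n in counts.items()
    }


# Invented event counts per runtime platform.
platform_counts = {
    "IBM Integration Bus": 30,
    "IBM WebSphere Liberty": 15,
    "IBM BPM": 5,
}
print(tag_sizes(platform_counts))
```

The busiest platform gets the largest size and the quietest gets the smallest, with everything else placed proportionally in between.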

4.4 Putting This All Together On A Dashboard

Now that we've got all these great visualisations to help us see the answers to our questions, let's put them all together on a dashboard.

Step 5 - Asking Interesting Questions Of Your Data

Now for the fun part.

OK, so you've got a great dashboard that provides you with a summary of all the activity happening in your pipeline.

That's useful on its own, but let's start asking some more specific questions of our data.

First, let's say we want to know more about what has been happening during a specific time period on a specific day. Zoom in on your timeline and select the period, and notice that all of the visualisations refresh to answer this new question: what has been happening in my pipeline during this specific period? Aha, so my dashboard is dynamic!

Figure 19 shows that we've zoomed in on 7 IBM Integration Bus events that all occurred between 13:06 and 13:24 on the 17th October.

Figure 19 - Zoom in to a specific period on the timeline.

Let's now say we only care about finding a specific build. Click on Build in your donut chart, and notice that again everything refreshes. We're left with 3 builds that occurred between 13:06 and 13:16.

Figure 20 - Zoom in on just build events.

Now say you care about a specific component. Select the soapPaymentServices component and you'll see it was involved in a Build at around 13:14, which ran on the pbciib10-container-02 build agent.

Figure 21 - Zoom in on the soapPaymentServices component.
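Each click in this scenario adds one more filter on top of the previous ones. As a rough sketch of what the combined question might look like as an ElasticSearch query body, assuming hypothetical field names (startTimestamp, pipelinePhase, component) that are mapped for exact matching, and assuming the year 2017 for the dates:

```python
import json


def build_filter_query(start, end, phase=None, component=None):
    """Build a query body that narrows events by time window, phase and component."""
    # Field names are assumptions. With default dynamic mapping the
    # exact-match field for a string is usually "<name>.keyword".
    filters = [{"range": {"startTimestamp": {"gte": start, "lte": end}}}]
    if phase is not None:
        filters.append({"term": {"pipelinePhase": phase}})
    if component is not None:
        filters.append({"term": {"component": component}})
    return {"query": {"bool": {"filter": filters}}}


# Time window first, then builds only, then a single component.
query = build_filter_query(
    "2017-10-17T13:06:00",
    "2017-10-17T13:24:00",
    phase="Build",
    component="soapPaymentServices",
)
print(json.dumps(query, indent=2))
```

Dropping the phase or component argument widens the question again, which mirrors "zooming out" on the dashboard.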

This short scenario only really scratches the surface of the kinds of analytics you can do with your data. By now you should be getting the sense for the real value in this solution: Being able to keep rephrasing the question we're asking, and then immediately get an updated answer across all of our visualisations, provides a powerful way to inspect our data set.

Summary

DevOps Analytics can give you fantastic insights into what is going on in your end-to-end delivery pipeline.

The solution I've presented puts in place a tool that allows all interested parties - developers, architects, stakeholders, release engineers, testers - to take a look into the pipeline and see how changes are flowing, see where activity is taking place, look for potential bottlenecks or areas for improvement, and generally visualise what is taking place.

Making this data available to everyone empowers them in ways that were not possible previously.

Try out the steps suggested in the blog post and visualize what is going on in your own delivery pipeline! Please do let me know how you get on.

I look forward to exploring this subject more in future blog posts. Please provide feedback if you found this interesting, and don't forget to follow @continualoop to hear more about my thoughts on improving software development and delivery. Let's improve IT!

Copyright © Continualoop Blog 2017. All rights reserved.
