Simplify Software Delivery Using Containers and the Cloud

Wouldn’t it be great if your teams could just focus on the coding? After all, we want to be innovative and write great software!

Copyright © Continualoop Blog 2018. All rights reserved.

Unfortunately, most really interesting software requires you to think about other things.

- How do you package up and deliver all the components of the solution?

- How do you take care of all of the dependencies?

- How do you make sure the end-to-end solution is scalable?

- How do you make sure that the solution is easy to manage?

Answering these questions requires you to consider a lot more than just “coding the logic”.

In times past, the only software that had these sorts of demands was large scale business IT systems. And to meet these demands they made use of a great platform: the mainframe.

Unfortunately, the mainframe did a lot of this at the expense of choice. The software had limitations – all UIs tended to be of the “green-screen” variety. All your code was written in COBOL. Integration was with CICS, as was data storage and retrieval. But you could mostly focus on your business logic, and the platform took care of things like build and deploy, dependency management, integration, data, scalability and operational needs.

Now when I started writing this post, I certainly didn't set out to make a case for the mainframe. But it is certainly interesting to consider that with the explosion of choice brought about in the distributed world, business IT systems got a lot more complex and had to make do without a standardised platform. With PC-based servers being stuck into data centres alongside the mainframe, there was an explosion of choice in terms of operating systems, development languages and application and data server platforms.

At the same time, “consumer-focused” software has become a lot more interesting. No longer just desktop applications. Think of all the mobile applications on your phone. Think of the social media platforms you interact with. Think of the Cloud-hosted solutions you consume. So, both consumer-focused software and business software now have a lot of complexity to deal with.

How is all of this back-story relevant to containers and the Cloud?

The short answer is that each has become a foundational component of a new, modern type of software platform which, like the mainframe in times past, allows our teams to focus on the task of writing software that is simpler to develop, deliver and operate.

Let’s start with containers, which (at least in their Docker form) burst onto the scene in a big way in 2014. How have containers helped?

Most importantly, containers have given us a standardized way of handling packaging and delivery of application components with the bold claim of “Build, Ship and Run Any App. Anywhere.”

Install Docker on any host machine (Windows, Linux, macOS). We all know the basic docker commands:

- docker build takes a simple text Dockerfile recipe and uses it to build a Docker image containing our app and all its configuration and dependencies.

- docker push lets us share this image by uploading it to a registry (such as Docker Hub), from where it can be downloaded using a simple docker pull.

- docker run lets us run the application, starting up a container based on the image that contains the running software application.
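Put together, the three commands form a short workflow. The sketch below is illustrative only: the image name example/hello-web, the tag and the port mapping are hypothetical, and it assumes a Dockerfile in the current directory and a registry account you are already logged in to. By default it only prints each command; set EXECUTE=1 to actually run them.

```shell
#!/bin/sh
# Hypothetical image name and tag: substitute your own registry account and app.
IMAGE="example/hello-web"
TAG="1.0"

# Print each command, and only execute it when EXECUTE=1 is set.
step() {
  echo "+ $*"
  if [ "${EXECUTE:-0}" = "1" ]; then
    "$@"
  fi
}

# Build an image from the Dockerfile in the current directory.
step docker build -t "$IMAGE:$TAG" .

# Upload the image to the registry (requires a prior "docker login").
step docker push "$IMAGE:$TAG"

# Start a container from the image, mapping host port 8080 to container port 80.
step docker run -d --name hello-web -p 8080:80 "$IMAGE:$TAG"
```

On any other machine with Docker installed, the last command on its own is enough: docker run pulls the image first if it is not already present locally.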

The most immediate benefit that draws most people to Docker is the speed with which you can distribute and start up a running “dockerized” application. Just executing a docker run command specifying a Docker image in your registry will automatically pull down the image and start up a running instance. The software will download quicker because Docker images are typically substantially smaller than an equivalent virtual machine image. And the software starts up quicker because a container shares the host's kernel rather than booting a full operating system, so containers start substantially quicker than virtual machines.

All of this makes it incredibly quick to reliably distribute new versions of software.

Each container is isolated from the others: it has its own filesystem, with its own copies of its libraries and other dependencies. This means that on a Docker host machine, you can download and run a variety of different bits of software without having to worry about them having incompatible dependencies.

This is great if you use Docker to allow yourself to experiment with software on your desktop machine without ever having to worry about any of the dependencies leaking out onto your host machine - or ever getting stuck where two bits of software use different versions of the same package or library or other piece of software. This used to be a major headache in managing applications. Docker solves this neatly.

Shipping an application component as a container also means that the implementation choices made by the developers of that component are isolated from other components. Developers can focus on making the appropriate implementation choices for their specific component, without having to worry about the knock-on effect on all other components in the software system. This is a really big deal. It allows you to be more innovative in the technologies you use – allowing you to experiment safely. This safety allows your teams to evolve their software to make use of new technologies and frameworks.

Dockerfiles allow you to codify all of the steps that you would normally manually perform to install your application on a host server. Updates to the operating system. Installation of tools, libraries and application server software. Installation and configuration of the application.

Just having this recipe written down somewhere is hugely useful as it helps in understanding the various dependencies that your application has.

But more than that, it means that modifying the install is so much easier. Just update the Dockerfile, re-run the docker build command, and Docker will create a new image with your changes in. Also, tags allow you to keep multiple versions of your image should you wish to support multiple versions of your application and its configuration.
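As a rough illustration, a Dockerfile for a small static site served by nginx might look like the one written out below. Everything specific here is an assumption made for the example: the hello-web directory, the site/ and nginx.conf files it copies in, and the example/hello-web image name.

```shell
#!/bin/sh
# Keep the recipe in its own (hypothetical) project directory.
mkdir -p hello-web

cat > hello-web/Dockerfile <<'EOF'
# Start from a known base image (the underlying platform).
FROM nginx:1.25

# Updates to the operating system.
RUN apt-get update && apt-get upgrade -y && rm -rf /var/lib/apt/lists/*

# Installation of tools and libraries.
RUN apt-get update \
    && apt-get install -y --no-install-recommends curl \
    && rm -rf /var/lib/apt/lists/*

# Installation and configuration of the application.
COPY site/ /usr/share/nginx/html/
COPY nginx.conf /etc/nginx/conf.d/default.conf

EXPOSE 80
EOF

# With Docker installed and the site/ and nginx.conf files in place, each
# change to the Dockerfile is followed by a rebuild under a new tag:
#   docker build -t example/hello-web:1.1 hello-web/
#   docker tag example/hello-web:1.1 example/hello-web:latest
```

Keeping the older 1.0 tag around alongside 1.1 is what lets you run, or roll back to, a previous version of the application and its configuration.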

The fact that this is all driven by a simple text file means that the “recipe” can be placed in source control, which means that now you can track all the changes that you make to the application, its dependencies, its configuration, and its underlying platform. This is immensely valuable for troubleshooting issues.

This also means that we can set up CI/CD pipelines that deploy more than just application changes. Now your entire application image, with all its dependencies and configuration, goes through your CI/CD pipelines, meaning that you can push out changes to test and production environments pretty quickly.

Let’s talk about Docker's “Run Any App, Anywhere” claim. Before Docker, the approach, tools and workflow for running your application component would often differ depending on whether you were running it on a local desktop or on a production server. Now you can run the very same image on your Windows or Mac desktop, on your bare-metal Linux server, in a virtual machine in your data centre, or even in the Cloud (take your pick of cloud). It just runs anywhere, and with the exact same functional behaviour.

This means it is so much easier to experiment with software by pulling it down from Docker Hub and running it on your desktop. I was able to get up and running with the entire Elastic Stack set of software on my desktop within minutes of reading about it. And then when I was finished, I could just run a docker rm command to remove the container with the application, its data, and all of its dependencies. Simple, neat and tidy.

The code executes locally on your desktop in exactly the same way as it will in your test environments and in production. Again, with all of its dependencies and configuration packaged in. It removes the “well it works on my machine” class of problems.

Now that we've heard about all the great benefits of packaging your applications in containers, let’s move our considerations to the Cloud. As we’ll see, containers pop up here as well.

Initially, the kinds of technologies that underpin the Cloud focused on two fundamental benefits:

- Freeing up your IT team from having to maintain infrastructure (compute, storage, databases and networking resources)

- Making your infrastructure (and therefore applications) far more flexible and scalable.

Think virtual machines, storage, and networking resources hosted outside of your data centre, with tools that allow you to create and operate these resources remotely as easily as if they were in your data centre. Add to that a billing model that bills you for consumption of these resources and there you have the fundamentals of today’s big Cloud players.

With the advent of containers, and container orchestration solutions, all the major Cloud players added Cloud platform services around Docker and Kubernetes to help customers move their applications to the Cloud.

In short, Kubernetes is a container orchestration platform that builds on top of Docker - allowing large numbers of containers to scale and work together, reducing operational burden.

Install Kubernetes on a group of machines (either bare metal or virtual) known as a cluster of nodes. One of the nodes will be the master – the others are workers, which is where your containers will run.

The fundamental unit in Docker at runtime is a container - and the equivalent in Kubernetes is a pod. A pod is made up of one or more Docker containers that are deployed together, started and stopped together, and share storage and network.

Let's get a feel for what our Kubernetes cluster allows us to do.

- kubectl get nodes provides you with an overview of the nodes in your cluster, with their status and how long they have been running for.

- kubectl describe node lets you zoom in on a specific node and provides a full report on its configuration, role, capacity, how it is doing in terms of resources (disk, memory, processes), what kind of host it is running on, and what its workload of pods is with their CPU and memory behaviour and limits.

- kubectl create -f lets you instantiate a pod using a simple text file description (in YAML format) of its containers and the resources they need. kubectl get pods lets you see the pods in your current namespace (across all nodes).
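A pod definition is short enough to show in full. The sketch below writes one to a file; the pod name, label, image and resource figures are all made up for the example, and the kubectl commands in the comments assume kubectl is already configured against a cluster.

```shell
#!/bin/sh
# Write a minimal pod definition (all names and numbers are hypothetical).
cat > hello-pod.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: hello-web
  labels:
    app: hello-web
spec:
  containers:
    - name: web
      image: nginx:1.25
      ports:
        - containerPort: 80
      resources:
        requests:
          cpu: 100m
          memory: 64Mi
        limits:
          cpu: 250m
          memory: 128Mi
EOF

# Against a running cluster you would then create and inspect the pod:
#   kubectl create -f hello-pod.yaml
#   kubectl get pods -o wide
#   kubectl describe pod hello-web
```

The requests and limits are what the node report from kubectl describe node adds up when it shows how much CPU and memory each pod has claimed.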

Let’s pause briefly and understand that pods can be scaled (multiple instances) using a replica set.

- kubectl create -f, given a replica set definition, will start up a specified number of instances of a pod - distributed across your cluster based on where it makes most sense. You can then update the number of pods by adjusting the replica set.
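A replica set definition wraps a pod template together with the number of copies you want. As a hedged sketch, with a hypothetical hello-web name and label and an arbitrary replica count:

```shell
#!/bin/sh
# Write a replica set definition (names and replica count are hypothetical).
cat > hello-rs.yaml <<'EOF'
apiVersion: apps/v1
kind: ReplicaSet
metadata:
  name: hello-web
spec:
  replicas: 3
  # The selector tells the replica set which pods it owns.
  selector:
    matchLabels:
      app: hello-web
  # The template is the pod definition used for every replica.
  template:
    metadata:
      labels:
        app: hello-web
    spec:
      containers:
        - name: web
          image: nginx:1.25
EOF

# Against a running cluster:
#   kubectl create -f hello-rs.yaml
#   kubectl scale replicaset hello-web --replicas=5
#   kubectl get pods -o wide
```

The last command lists the node each pod landed on, which is a quick way to see how the instances were spread across the cluster.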

Let’s further understand that a deployment will allow us to do a rolling update of replica sets.

- kubectl create deployment will create a deployment in your cluster that will start up a set of pods/replica sets. Adjusting the deployment will allow you to achieve rolling updates i.e. it will coordinate spinning down the old pods/replica sets and spinning up new replacement pods/replica sets.
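For a feel of what a rolling update looks like in practice, here is a minimal sketch. The deployment name, labels, image versions and the surge settings are assumptions chosen for the example rather than recommended values.

```shell
#!/bin/sh
# Write a deployment definition (names and settings are hypothetical).
cat > hello-deploy.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-web
spec:
  replicas: 3
  selector:
    matchLabels:
      app: hello-web
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0   # never drop below the desired number of pods
      maxSurge: 1         # add at most one extra pod during the update
  template:
    metadata:
      labels:
        app: hello-web
    spec:
      containers:
        - name: web
          image: nginx:1.25
EOF

# Against a running cluster:
#   kubectl apply -f hello-deploy.yaml
#   kubectl set image deployment/hello-web web=nginx:1.26   # triggers a rolling update
#   kubectl rollout status deployment/hello-web             # watch it progress
#   kubectl rollout undo deployment/hello-web               # roll back if needed
```

Behind the scenes the deployment creates a new replica set for the new image and scales it up while scaling the old one down, within the limits set by maxUnavailable and maxSurge.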

So, having taken a look at a few of the Kubernetes commands, we can see it gives us simple-to-use tools to manage our cluster of machines, as well as manage and scale the application components deployed to that cluster. Let's understand the benefits this brings us.

Let’s start by considering that you no longer have to worry about which physical machine your application components end up running on.

This is a big deal! Applications and their load are constantly changing, but now the platform can decide for us how to best utilise our resources across all of our applications and their changing workload requirements.

Now let's understand that we have a common way of scaling any and all of our application components - irrespective of the development language, frameworks and application platform that each of them has been implemented with.

This removes a lot of potential complexity when considering your systems end-to-end. This consistency also allows the platform to make trade-offs between different types of application components (maybe front-end vs back-end) when it comes to scaling and provisioning instances of the component.

Each pod has a mechanism for reporting its "health" back to the platform. This allows the platform to detect when a process has died, or the pod is misbehaving in any way, and allows the platform to un-provision the bad pod and spin up a replacement.
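In Kubernetes this health mechanism is configured as probes on each container. The sketch below is an assumption-laden example (hypothetical pod name, an HTTP check against the root path, arbitrary timings): a failing liveness probe leads the node's kubelet to restart the container, while a failing readiness probe stops traffic being routed to the pod until it recovers.

```shell
#!/bin/sh
# Write a pod definition with health probes (names and timings are hypothetical).
cat > hello-probe-pod.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: hello-web-probed
  labels:
    app: hello-web
spec:
  containers:
    - name: web
      image: nginx:1.25
      ports:
        - containerPort: 80
      # Restart the container if this check fails three times in a row.
      livenessProbe:
        httpGet:
          path: /
          port: 80
        initialDelaySeconds: 5
        periodSeconds: 10
        failureThreshold: 3
      # Only send traffic to the pod while this check passes.
      readinessProbe:
        httpGet:
          path: /
          port: 80
        periodSeconds: 5
EOF

# Against a running cluster:
#   kubectl create -f hello-probe-pod.yaml
#   kubectl describe pod hello-web-probed   # probe results show up under Events
```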

It is at this point that you will appreciate how much more robust your applications have become. And again, because the platform takes care of this in a standardised way, we haven't introduced a lot of additional complexity for our developers.

When a cluster does start running short on resources, extending your infrastructure is dead simple. The new machine (bare metal or virtual) has the worker node software installed, it gets registered with the cluster, and immediately it can be made available to start taking on some of the load from the existing nodes. Many cloud providers provide the underlying services that will allow the cluster to do this automatically.

Importantly, let's note that the cluster can do this without any service outage. Pods are removed from existing nodes and moved to the new node without any downtime for the users. This makes life easier for our operations teams whose reason for existence is to keep the application up and providing a good quality of service.

Pods, replica sets and deployments (and all other resources in the cluster) are all created from simple text file definitions.

This has a number of benefits. It is easier to understand each application component and all of its resource needs. We can put them into source control and include them in CI/CD pipelines. And Kubernetes allows these CI/CD pipelines to easily achieve advanced deployment practices such as blue/green deploys, canary releases and deploys to support A/B testing.
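One common way to do a blue/green deploy, sketched here under assumptions, is to run two deployments whose pods carry a version label and to point a service at one of them. The service name and the app and version labels below are hypothetical, and the sketch assumes both sets of pods already exist in the cluster.

```shell
#!/bin/sh
# Write a service definition that currently routes to the "blue" pods.
cat > hello-svc.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: hello-web
spec:
  # Traffic goes to pods carrying both of these labels.
  selector:
    app: hello-web
    version: blue
  ports:
    - port: 80
      targetPort: 80
EOF

# Against a running cluster:
#   kubectl apply -f hello-svc.yaml
#
# Once the "green" pods are up and healthy, switch traffic over in one step:
#   kubectl patch service hello-web \
#     -p '{"spec":{"selector":{"app":"hello-web","version":"green"}}}'
```

Because the switch is just a change to a text definition, a CI/CD pipeline can make it, and reverse it, in the same way it makes any other change.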

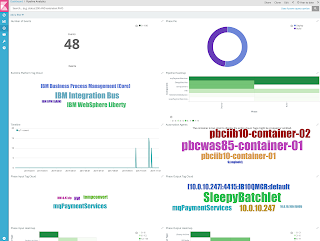

In addition to providing a fantastic platform for running your application containers, Kubernetes is also a fantastic platform for running operations tools to manage your applications. Commonly you’ll have containers running for services such as logging (for example, using the Elastic Stack) and monitoring (commonly using Prometheus).

This makes it easier to create richer application platforms, where the additional platform components benefit from the same scalability, robustness, and flexibility as your application components - and using common mechanisms.

As a reminder of where we started: we all want to be able to focus on innovation and writing great software.

Containers provide us with a simple model for removing the hassle of reliably building and shipping our software components.

Cloud-enabling platform services such as Kubernetes greatly simplify scaling applications, achieving near-zero downtime, hosting platform services such as logging and monitoring, and achieving the best utilization of your resources, whether they are in the Cloud or in your own datacentre.

Given all these benefits, why would you want to do things any other way? Welcome to the future - it is now!
